
Self-supervised Video Object Segmentation by Motion Grouping

Charig Yang, Hala Lamdouar, Erika Lu, Weidi Xie, Andrew Zisserman

Full: ICCV, 2021

Short: CVPR Workshop on Robust Video Scene Understanding, 2021 (Best Paper Award)

@InProceedings{yang2021selfsupervised,
  title={Self-supervised Video Object Segmentation by Motion Grouping},
  author={Charig Yang and Hala Lamdouar and Erika Lu and Andrew Zisserman and Weidi Xie},
  booktitle={ICCV},
  year={2021},
}

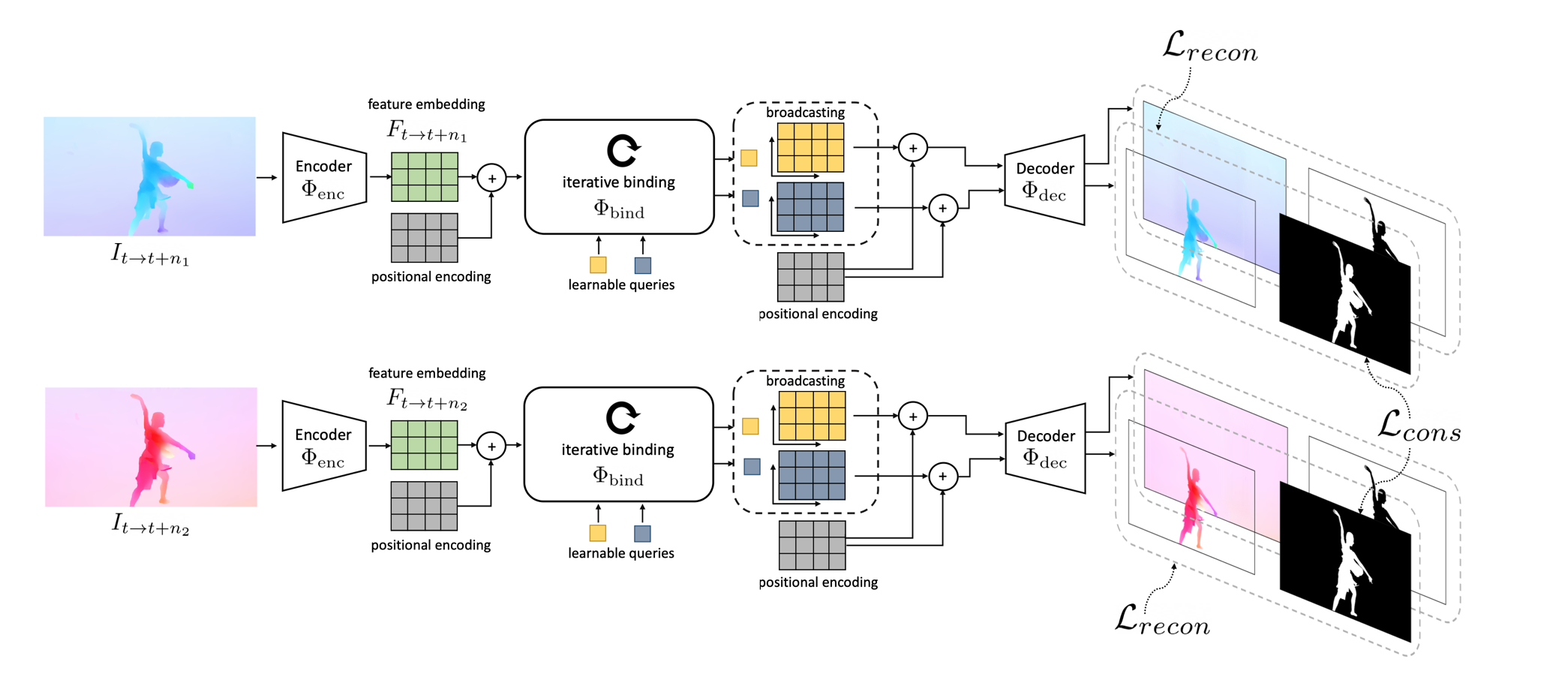

Animals have evolved highly functional visual systems to understand motion, assisting perception even in complex environments. In this paper, we work towards developing a computer vision system able to segment objects by exploiting motion cues, i.e. motion segmentation. We make the following contributions: First, we introduce a simple variant of the Transformer to segment optical flow frames into primary objects and the background. Second, we train the architecture in a self-supervised manner, i.e. without using any manual annotations. Third, we analyze several critical components of our method and conduct thorough ablation studies to validate their necessity. Fourth, we evaluate the proposed architecture on public benchmarks (DAVIS2016, SegTrackv2, and FBMS59). Despite using only optical flow as input, our approach achieves superior results compared to previous state-of-the-art self-supervised methods, while being an order of magnitude faster. We additionally evaluate on a challenging camouflage dataset (MoCA), significantly outperforming the other self-supervised approaches and comparing favourably to the top supervised approach, highlighting the importance of motion cues and the potential bias towards visual appearance in existing video segmentation models.
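The abstract does not spell out the architecture or the training objective. The sketch below illustrates the general idea of grouping an optical flow field into a few soft layers and scoring the grouping by how well it reconstructs the flow, which needs no manual annotations. It is a toy, parameter-free caricature under stated assumptions, not the authors' model: we assume a slot-attention-style competition between slots (softmax over slots, weighted mean over pixels), substitute negative squared Euclidean distance for learned dot-product attention, use a hypothetical farthest-point slot initialisation, and let each slot "decode" to a single constant flow vector instead of a learned decoder. The names `group_flow` and `reconstruct` are hypothetical.

```python
import numpy as np


def softmax(x, axis):
    # Numerically stable softmax along the given axis
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def group_flow(flow, num_slots=2, iters=10, temperature=0.1, seed=0):
    """Split a flow field of shape (H, W, 2) into num_slots soft masks.

    Toy sketch: no learned projections, GRU or CNN decoder (assumption).
    Returns masks (K, H, W) summing to 1 per pixel, and slot vectors (K, 2).
    """
    h, w, c = flow.shape
    feats = flow.reshape(-1, c).astype(float)  # per-pixel features (N, 2)
    rng = np.random.default_rng(seed)

    # Hypothetical farthest-point initialisation so slots start apart
    slots = [feats[rng.integers(len(feats))]]
    for _ in range(1, num_slots):
        d = np.min([((feats - s) ** 2).sum(-1) for s in slots], axis=0)
        slots.append(feats[np.argmax(d)])
    slots = np.stack(slots)

    for _ in range(iters):
        # Negative squared distance stands in for learned dot-product attention
        dist = ((feats[:, None, :] - slots[None, :, :]) ** 2).sum(-1)  # (N, K)
        attn = softmax(-dist / temperature, axis=1)  # slots compete for each pixel
        weights = attn / (attn.sum(axis=0, keepdims=True) + 1e-8)  # normalise over pixels
        slots = weights.T @ feats  # each slot becomes a weighted mean flow (K, 2)

    masks = attn.T.reshape(num_slots, h, w)
    return masks, slots


def reconstruct(masks, slots):
    # Alpha-composite constant per-slot flows back into a full flow field
    return np.einsum("khw,kc->hwc", masks, slots)


if __name__ == "__main__":
    # Synthetic flow: static background, a square moving to the right
    flow = np.zeros((64, 64, 2))
    flow[20:36, 20:36] = [1.0, 0.0]

    masks, slots = group_flow(flow)
    loss = np.mean((reconstruct(masks, slots) - flow) ** 2)  # self-supervised signal

    fg = int(np.argmax(np.linalg.norm(slots, axis=1)))  # moving slot = foreground
    pred = masks[fg] > 0.5
    gt = np.linalg.norm(flow, axis=-1) > 0
    iou = (pred & gt).sum() / (pred | gt).sum()
    print(f"reconstruction MSE: {loss:.2e}, foreground IoU: {iou:.2f}")
```

In this toy the reconstruction error is the only supervision: a grouping that puts the moving square and the static background in separate slots reconstructs the flow almost exactly, while mixing them does not. A learned model would replace the fixed distance and constant-flow decoder with trained components, which this sketch deliberately omits.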
